The Hyper Flow 956153205 Fusion Node is described as a real-time data orchestrator for distributed systems. It emphasizes deterministic scheduling, strong isolation, and pipelined throughput. Adapters and consistent schemas let it run in both edge and data-center deployments, and explicit reliability practices cover upgrades and recovery. Tradeoffs are weighed against the organization's risk appetite. Two questions remain open: how do these components perform under varied workloads and operational constraints, and what compromises emerge as scale increases?

What the Hyper Flow 956153205 Fusion Node Is Built For

The Hyper Flow 956153205 Fusion Node is engineered to optimize real-time data integration and high-throughput task orchestration across distributed systems.

It addresses insight gaps by revealing latent correlations and timing patterns, and it tackles integration challenges through adapters, consistent schemas, and deterministic flows.

This design emphasizes clarity, autonomy, and freedom for engineers pursuing scalable, resilient data coordination.

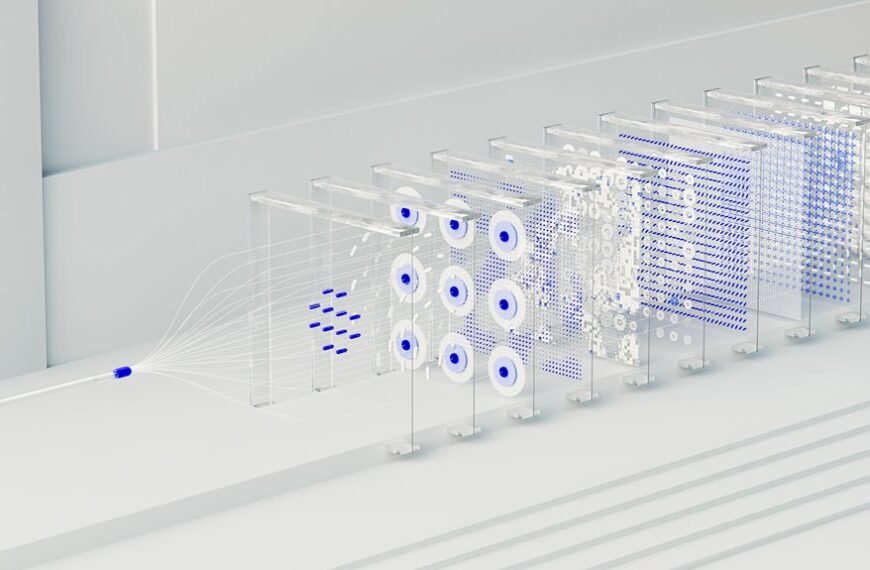

Core Architecture and What Makes It Fast

The speed of the Hyper Flow 956153205 Fusion Node comes from a deliberate combination of modular components, each optimized for low-latency data paths and deterministic scheduling. The design emphasizes predictable pacing, resource isolation, and pipelined throughput. These three properties are also the axes along which its performance, scalability, and resilience can be evaluated.
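To make "deterministic scheduling" concrete, here is a minimal sketch in Python. It is not the Fusion Node's actual scheduler, which the text does not specify. The `Task` fields (`release_tick`, `priority`, `seq`) and the function `deterministic_order` are hypothetical names chosen for illustration. The idea shown is only that a total ordering with an explicit tie-breaker yields the same execution order regardless of how tasks arrive.

```python
import heapq
from dataclasses import dataclass, field
from typing import Iterable, List


@dataclass(order=True)
class Task:
    # Hypothetical fields: ordering compares these three in sequence.
    release_tick: int   # earliest logical tick at which the task may run
    priority: int       # lower value runs first within the same tick
    seq: int            # submission sequence number, used as a tie-breaker
    name: str = field(compare=False)  # excluded from ordering


def deterministic_order(tasks: Iterable[Task]) -> List[str]:
    """Return task names in a fixed order that does not depend on arrival order."""
    heap = list(tasks)
    heapq.heapify(heap)
    order = []
    while heap:
        order.append(heapq.heappop(heap).name)
    return order


tasks = [
    Task(2, 0, 0, "enrich"),
    Task(1, 1, 1, "parse"),
    Task(1, 0, 2, "ingest"),
    Task(1, 1, 3, "validate"),
]

# The same set of tasks yields the same order even when submitted in reverse.
print(deterministic_order(tasks))
print(deterministic_order(reversed(tasks)))
```

Because every task has a unique `seq`, no two tasks compare equal, so the order is fully determined by the task set rather than by timing or arrival order.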

Practical Deployment Scenarios and Use Cases

Practical deployment scenarios for the Hyper Flow 956153205 Fusion Node center on predictable, scalable integration within edge and data-center ecosystems, where deterministic latency and robust isolation are essential.

Deployment scalability is a core lever: it enables modular rollouts, cross-domain coordination, and controlled upgrades.

Latency optimization is treated as a measurable discipline that guides topology, scheduling, and fault containment without sacrificing flexibility or autonomy.
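One way to treat latency as something measured rather than asserted is to check a tail percentile of observed latencies against a budget. The sketch below assumes latency samples in milliseconds are already collected. The function names, the sample values, and the budgets are illustrative and not taken from any Fusion Node tooling.

```python
import math
from typing import Sequence


def percentile(samples: Sequence[float], pct: float) -> float:
    """Nearest-rank percentile: the smallest sample with at least pct% of samples at or below it."""
    if not samples:
        raise ValueError("no samples")
    ordered = sorted(samples)
    rank = max(1, math.ceil(pct / 100 * len(ordered)))
    return ordered[rank - 1]


def within_budget(samples_ms: Sequence[float], budget_ms: float, pct: float = 99.0) -> bool:
    """True if the chosen tail percentile stays at or under the latency budget."""
    return percentile(samples_ms, pct) <= budget_ms


# Illustrative samples (ms); one slow outlier dominates the tail.
samples = [10, 12, 11, 48, 13, 9, 14, 15, 12, 11]

print(percentile(samples, 50))      # median
print(percentile(samples, 99))      # tail latency
print(within_budget(samples, 50))   # budget of 50 ms
print(within_budget(samples, 40))   # tighter budget of 40 ms
```

Checking a tail percentile rather than the mean matters here because a single slow path can break a deterministic-latency guarantee while leaving the average almost unchanged.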

Tradeoffs, Tradeproofing, and How to Decide If It Fits Your Infra

Tradeoffs, tradeproofing, and fit assessment hinge on balancing performance guarantees, operational burden, and total cost of ownership across use cases. The evaluation isolates reliability, scalability, and maintenance overhead, then maps these to organizational risk appetite.

Tradeoffs emerge between latency and resilience, while tradeproofing focuses on verification, redundancy, and recovery procedures.

Decision criteria center on alignment with infra goals and permissible risk.
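The mapping from evaluation criteria to risk appetite can be sketched as a weighted score compared against a minimum threshold. Everything in this example is an assumption made for illustration: the criterion names mirror the ones listed above, but the 0-to-1 scores, the weights, and the thresholds are hypothetical and would need to come from an organization's own assessment.

```python
from typing import Dict


def fit_score(scores: Dict[str, float], weights: Dict[str, float]) -> float:
    """Weighted average of per-criterion scores (each score in the range 0..1)."""
    total_weight = sum(weights.values())
    return sum(scores[name] * weights[name] for name in weights) / total_weight


def fits(scores: Dict[str, float], weights: Dict[str, float], min_score: float) -> bool:
    """True if the weighted score meets the threshold set by the risk appetite."""
    return fit_score(scores, weights) >= min_score


# Hypothetical assessment: higher is better; "maintenance" scores low overhead as high.
scores = {"reliability": 0.8, "scalability": 0.6, "maintenance": 0.4}
# Hypothetical weights: this organization cares most about reliability.
weights = {"reliability": 2.0, "scalability": 1.0, "maintenance": 1.0}

print(round(fit_score(scores, weights), 2))
print(fits(scores, weights, min_score=0.6))   # more risk-tolerant threshold
print(fits(scores, weights, min_score=0.7))   # more risk-averse threshold
```

A lower threshold corresponds to a more risk-tolerant organization. The same assessment can therefore pass for one team and fail for another, which is the point of tying the decision to permissible risk.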

Conclusion

The Hyper Flow 956153205 Fusion Node provides precise, modular orchestration for high-throughput, low-latency data pathways. Its deterministic scheduling, strong isolation, and pipelined throughput enable scalable edge and data-center deployments with robust upgrade and recovery strategies. In a hypothetical financial operations case, a cross-region analytics pipeline maintains sub-50 ms latency while isolating risk-bound workloads, ensuring continuity during node failures. The decision to adopt hinges on explicit reliability requirements and clear tradeproofing, balancing speed with maintainable resilience.