Hyper Node 931815261 Neural Prism is a modular, high-density processing framework designed for scalable, reconfigurable computation. It distributes tasks across autonomous units and uses dynamic routing over what the design calls an "ethereal topology" to optimize data flow and reallocate resources. Recursive fusion aims to reduce latency while preserving determinism, supported by parallel pathways and synchronized inter-node signaling. The architecture emphasizes fault containment, staged deployment, and auditable governance. Its potential impact calls for rigorous validation and ethical data stewardship, and practical integration questions remain open until the framework receives structured, objective evaluation.

What Hyper Node 931815261 Neural Prism Is

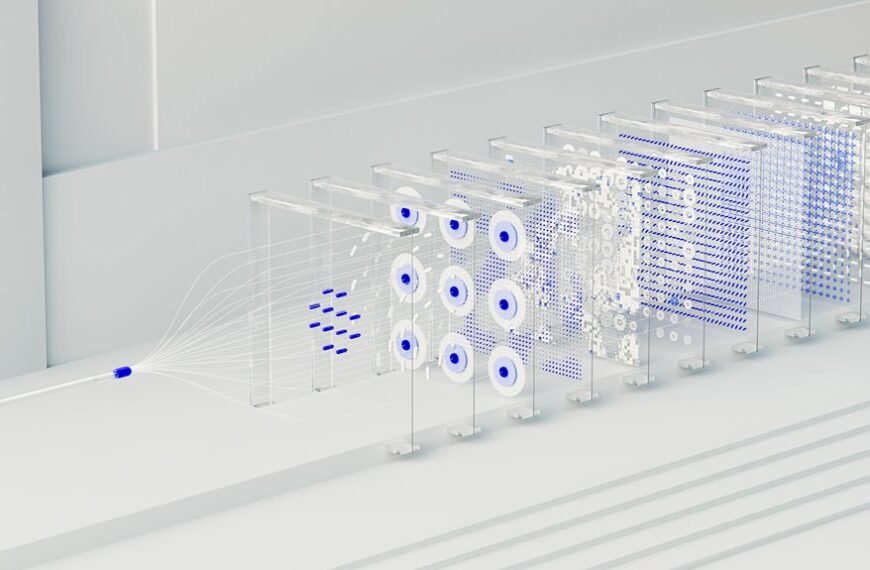

The Hyper Node 931815261 Neural Prism is a conceptual framework that integrates high-density neural processing with modular, reconfigurable nodes.

It delineates independent processing units, enabling scalable composition without centralized bottlenecks.

Hyper Node emphasizes modular exchange, while Neural Prism highlights dynamic routing and parallel pathways.

The design is meant to support rapid iteration, rigorous validation, and disciplined experimentation within configurable, transparent architectures.

How the Neural Prism Architecture Works

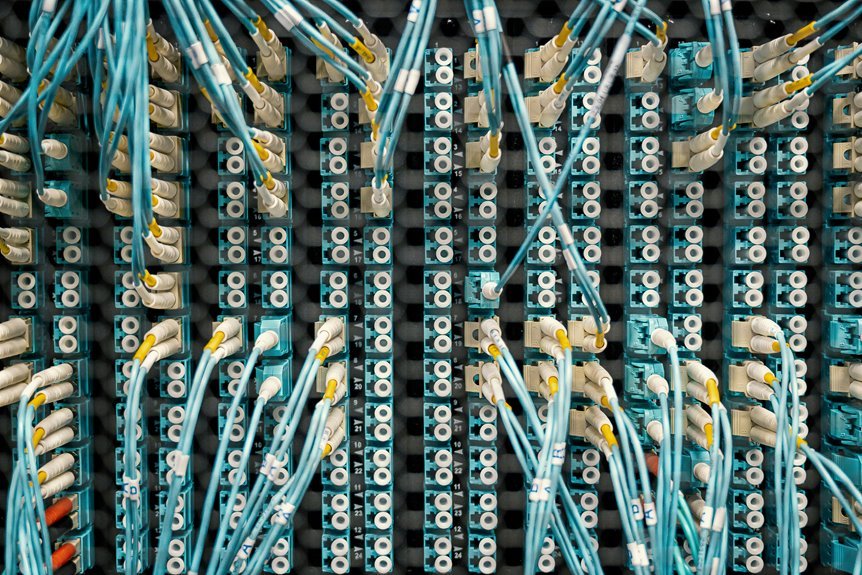

The Neural Prism architecture distributes computation across modular, reconfigurable nodes that route data and tasks through parallel pathways. It tracks data flow over what the design calls an "ethereal topology", so that resources can be reallocated dynamically. Recursive fusion integrates intermediate results, with the aim of reducing latency while preserving determinism. Nodes coordinate through synchronized signals, and fault containment is intended to keep a failure in one node from degrading overall throughput. The goal is a scalable, transparent system that can adapt to diverse workloads.
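The flow described above can be illustrated with a minimal Python sketch. It is not the Prism's implementation, which the text does not specify: a thread pool stands in for the nodes, and `run_on_nodes`, `fuse`, and `square` are hypothetical names chosen for illustration. Partial results are fused pairwise in input order, so the outcome does not depend on which worker finishes first.

```python
from concurrent.futures import ThreadPoolExecutor
from typing import Callable, List, Sequence


def fuse(results: Sequence[int]) -> int:
    """Recursively fuse partial results pairwise, in a fixed order.

    Addition is an assumed fusion operator; any associative
    operation could take its place.
    """
    if not results:
        return 0
    if len(results) == 1:
        return results[0]
    mid = len(results) // 2
    return fuse(results[:mid]) + fuse(results[mid:])


def run_on_nodes(tasks: List[int], work: Callable[[int], int], num_nodes: int = 4) -> int:
    """Distribute tasks across worker 'nodes' and fuse their partial results."""
    with ThreadPoolExecutor(max_workers=num_nodes) as pool:
        # pool.map yields results in input order, regardless of
        # which worker completes first, which keeps fusion deterministic.
        partials = list(pool.map(work, tasks))
    return fuse(partials)


def square(x: int) -> int:
    """Hypothetical per-task workload."""
    return x * x


total = run_on_nodes([1, 2, 3, 4, 5], square)
print(total)  # 55
```

Keeping the fusion order tied to the input order, rather than to completion order, is one simple way to get parallel execution without giving up reproducible results.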

Use Cases and Real-World Impact

Because the Neural Prism is a conceptual framework, its impact is best described in terms of potential. In principle, the architecture could benefit diverse sectors by enabling dynamic resource allocation, low-latency processing, and scalable task routing.

It could support disaster recovery workflows, accelerate real-time analytics, and enhance autonomous operations while keeping them under governance.

These uses raise ethical questions about data stewardship, transparency, and bias mitigation, which call for rigorous validation, auditable decisions, and principled deployment.

How to Evaluate and Deploy the Prism in Your Stack

How can organizations systematically assess the Prism’s fit within existing architectures and governance models, then execute a controlled deployment that minimizes risk while maximizing measurable value?

The evaluation framework quantifies interoperability, data privacy, and model governance across pipelines, controls, and audits.
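One way to make such an evaluation concrete is a weighted scorecard. The sketch below is only an assumption about how the three dimensions might be combined: the `Criterion` class, the `weighted_score` function, the weights, and the scores are all hypothetical and would need to be set by the evaluating organization.

```python
from dataclasses import dataclass
from typing import Sequence


@dataclass(frozen=True)
class Criterion:
    name: str
    weight: float  # relative importance (hypothetical values)
    score: float   # assessed fit, from 0.0 (poor) to 1.0 (excellent)


def weighted_score(criteria: Sequence[Criterion]) -> float:
    """Return the weight-normalized average score across all criteria."""
    total_weight = sum(c.weight for c in criteria)
    if total_weight <= 0:
        raise ValueError("total weight must be positive")
    return sum(c.weight * c.score for c in criteria) / total_weight


# Illustrative numbers only, not measurements.
scorecard = [
    Criterion("interoperability", weight=2, score=0.5),
    Criterion("data privacy", weight=2, score=1.0),
    Criterion("model governance", weight=1, score=0.5),
]
print(round(weighted_score(scorecard), 2))  # 0.7
```

A single number hides trade-offs, so a scorecard like this works best alongside the per-criterion scores rather than in place of them.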

Deployment proceeds through staged pilots with strict rollback criteria and continuous monitoring, so that teams can demonstrate measurable value, maintain compliance, and adapt as requirements change.
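The staged approach can be sketched as a simple control loop. This is a minimal illustration under stated assumptions, not a prescribed procedure: `staged_rollout` and the `healthy` callback are hypothetical names, the stage percentages are arbitrary, and rolling back to the last healthy stage (rather than to zero) is one possible policy.

```python
from typing import Callable, List, Sequence


def staged_rollout(stages: Sequence[int], healthy: Callable[[int], bool]) -> List[str]:
    """Advance through traffic percentages, rolling back on the first failed check.

    `healthy` stands in for continuous monitoring: it receives the
    current stage and returns False if metrics breach a threshold.
    """
    log: List[str] = []
    last_good = 0  # percentage of the last stage that passed its check
    for pct in stages:
        log.append(f"deploy {pct}%")
        if not healthy(pct):
            log.append(f"rollback to {last_good}%")
            return log
        last_good = pct
    log.append("rollout complete")
    return log


# Simulated monitor that fails only at full traffic.
for entry in staged_rollout([5, 25, 100], lambda pct: pct < 100):
    print(entry)
```

Returning the log rather than printing inside the function keeps an audit trail of each step, which fits the emphasis on auditable deployment.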

Conclusion

In summary, the Hyper Node 931815261 Neural Prism describes a modular, scalable approach to neural processing, built around dynamic resource reallocation and low-latency fusion across distributed nodes. Its "ethereal topology" and fault-containment strategies are intended to promote predictable performance and rigorous governance, while staged deployment and continuous monitoring support auditable stewardship. Like a well-rehearsed orchestra, the Prism is meant to bring parallel pathways together into coherent computation, though its practical value still depends on disciplined validation.