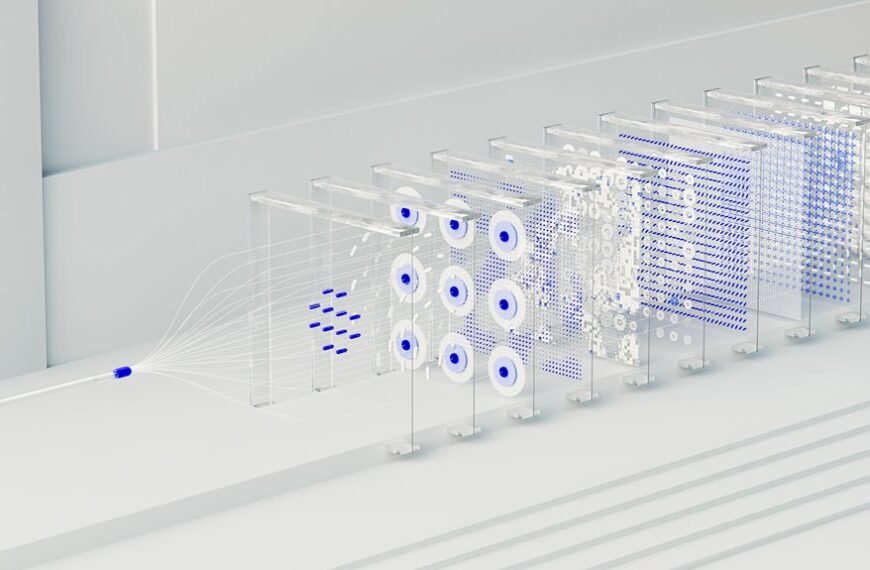

The Stellar Node 695673972 Performance Ladder is a structured framework for assessing node capability through measurable metrics. It combines throughput, latency, and uptime to gauge reliability, and offers practical tuning guidance for CPU, memory, threads, gas limits, and batch sizes. It also translates technical results into UX signals aligned with governance thresholds. Because evaluations are repeatable under varying network conditions, operators can revisit their decisions as targets shift and stakes rise.

What the Stellar Node 695673972 Ladder Measures

The ladder measures three properties of a node: throughput, latency, and uptime. Scoring them enables independent assessment, which supports transparent benchmarking and informed decisions about node deployment, maintenance priorities, and how well a node’s capability matches network demands.

How Throughput, Latency, and Uptime Stack Up

Throughput, latency, and uptime together define a node’s operational profile within the Stellar network. Throughput reflects transaction-handling capacity, latency measures response time, and uptime signals availability. Together they determine reliability and efficiency. The three trade off against one another, so performance targets and thresholds should be set with network conditions and governance expectations in mind.
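To make the three metrics concrete, here is a minimal Python sketch that derives them from a series of observation windows. The `NodeSample` structure, its field names, and the `summarize` function are hypothetical and not part of any Stellar tooling; the sketch only assumes that transaction counts, response times, and health-check results have been collected somehow.

```python
from dataclasses import dataclass
from statistics import quantiles


@dataclass
class NodeSample:
    """One observation window for a node (hypothetical structure)."""
    window_seconds: float      # length of the observation window
    transactions: int          # transactions processed in the window
    latencies_ms: list[float]  # per-request response times in the window
    available: bool            # whether the node answered health checks


def summarize(samples: list[NodeSample]) -> dict[str, float]:
    """Aggregate throughput, latency percentiles, and uptime over all windows."""
    total_seconds = sum(s.window_seconds for s in samples)
    total_tx = sum(s.transactions for s in samples)
    up_seconds = sum(s.window_seconds for s in samples if s.available)
    all_latencies = [ms for s in samples for ms in s.latencies_ms]
    # quantiles(n=100) returns 99 cut points: index 49 is p50, index 94 is p95.
    # It needs at least two latency observations.
    cuts = quantiles(all_latencies, n=100, method="inclusive")
    return {
        "throughput_tps": total_tx / total_seconds,
        "latency_p50_ms": cuts[49],
        "latency_p95_ms": cuts[94],
        "uptime_ratio": up_seconds / total_seconds,
    }
```

Reporting percentiles rather than a mean keeps slow outliers visible, which matters when the concern is how the node behaves under congestion.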

Practical Tuning Tips for Validators and Operators

Operators can apply concrete adjustments to balance throughput, latency, and uptime in line with observed network conditions. Validators can tune CPU and memory allocations, worker threads, and gas limits against throughput benchmarks. Operators should monitor the latency distribution and adjust batch sizes and timeout thresholds to minimize tail latency while preserving reliability. Configurations should be repeatable, kept under version control, and backed by rollback procedures.
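One way to adjust batch sizes against tail latency is a simple feedback rule: back off quickly when the tail exceeds a target, and grow cautiously when there is headroom. The sketch below is an illustration of that idea only. The function name, the 80% dead band, and the size limits are assumptions chosen for the example, not recommended values.

```python
def next_batch_size(current: int, p99_ms: float, target_ms: float,
                    min_size: int = 1, max_size: int = 1000) -> int:
    """Return the batch size to use next, given the observed p99 latency.

    Hypothetical rule: halve when the tail is over target, grow by about
    10% when it is comfortably under, otherwise leave the size unchanged.
    """
    if p99_ms > target_ms:
        proposed = current // 2                      # tail too slow: back off quickly
    elif p99_ms < 0.8 * target_ms:
        proposed = current + max(1, current // 10)   # headroom: grow cautiously
    else:
        proposed = current                           # inside the dead band: hold
    # Clamp so the size never drops below min_size or exceeds max_size.
    return max(min_size, min(max_size, proposed))
```

The dead band between 80% and 100% of the target avoids oscillating between two sizes when latency hovers near the threshold.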

Interpreting Results: Turning Metrics Into User Experience

Interpreting results means translating raw performance metrics into user-facing implications. Throughput and latency profiles are distilled into concrete UX signals, so operators can assess the impact on end-user perception. By mapping metrics to workflows, stakeholders can identify performance bottlenecks, prioritize repairs, and quantify improvements. Clear, repeatable evaluation keeps decisions aligned with user expectations and system reliability.
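As a rough illustration of turning metrics into a UX signal, the sketch below classifies a node from its p95 latency and uptime ratio. The signal names and every threshold are placeholders invented for this example; real values would come from whatever governance thresholds apply.

```python
from enum import Enum


class UxSignal(Enum):
    GOOD = "good"
    DEGRADED = "degraded"
    POOR = "poor"


def classify(latency_p95_ms: float, uptime_ratio: float,
             latency_good_ms: float = 1000.0, latency_poor_ms: float = 5000.0,
             uptime_good: float = 0.999, uptime_poor: float = 0.99) -> UxSignal:
    """Map two metrics to a coarse UX signal; the worse metric decides.

    All default thresholds are hypothetical placeholders.
    """
    if latency_p95_ms > latency_poor_ms or uptime_ratio < uptime_poor:
        return UxSignal.POOR
    if latency_p95_ms > latency_good_ms or uptime_ratio < uptime_good:
        return UxSignal.DEGRADED
    return UxSignal.GOOD
```

Letting the worse metric decide reflects the idea that users notice the weakest link: a fast node that is often unreachable still feels unreliable.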

Conclusion

In the Stellar Node 695673972 ladder, a lighthouse is set on a shifting sea. Throughput, latency, and uptime are its beacons, guiding ships of transactions through reefs of congestion and the fog of delays. Validators tune their engines and adjust their sails to steady the course. As conditions change, the beacon adapts, signaling safe passage or the need to retreat to calmer waters, until governance thresholds align and the voyage proceeds with confidence.