Puwipghooz8.9 Edge is a distributed, low-latency framework designed to process data locally across heterogeneous nodes. It combines scalable orchestration, lightweight runtimes, and edge caching with secure telemetry and fault-tolerant messaging. The intended benefits are reduced latency, localized decision-making, and adaptive resource allocation, all of which should be verified against measured performance data. For developers, the core concerns are data provenance, modular edge workflows, and governance-aligned deployment, weighed against the added complexity and security trade-offs of autonomous, real-time scenarios.

What Is Puwipghooz8.9 Edge and Why It Matters

Puwipghooz8.9 Edge matters to the extent that it improves performance, scalability, and reliability for workloads that run close to where data is produced.

This article focuses on measurable effects, comparative benchmarks, and context-dependent constraints. Claims about benefits should be data-driven and reproducible, so that any assessment of Puwipghooz8.9 Edge stays grounded in evidence.

Core Features That Power the Edge Experience

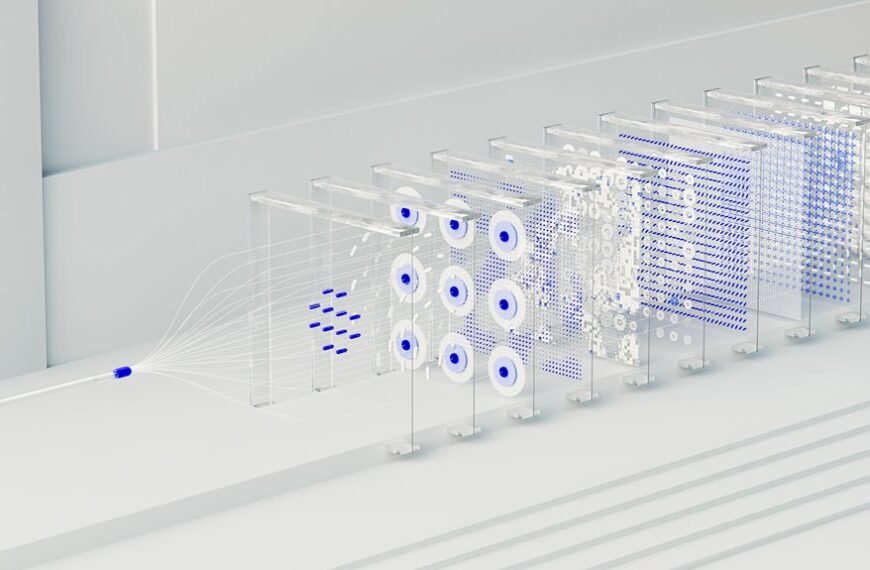

Five core features drive the edge experience and enable low latency, high resilience, and scalable deployment across distributed environments: distributed orchestration, lightweight runtime environments, edge caching, secure telemetry, and fault-tolerant messaging.

Scalability and latency gains come from localized processing, consistent synchronization, and adaptive resource allocation. Together these support resilient deployments across heterogeneous networks with minimal coordination overhead.
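To make edge caching and fault tolerance more concrete, here is a minimal Python sketch. It is not part of Puwipghooz8.9 Edge itself: the `EdgeCache` class, its `ttl_seconds` parameter, and the `fetch` callback are hypothetical names chosen for illustration. The sketch assumes a node is allowed to serve a stale cached value when its upstream origin cannot be reached.

```python
import time
from typing import Any, Callable, Dict, Tuple


class EdgeCache:
    """Hypothetical TTL cache that falls back to stale data if the origin fails."""

    def __init__(self, ttl_seconds: float,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self.ttl = ttl_seconds
        self.clock = clock  # injectable so the cache can be tested without sleeping
        self._store: Dict[str, Tuple[float, Any]] = {}

    def get(self, key: str, fetch: Callable[[str], Any]) -> Any:
        now = self.clock()
        entry = self._store.get(key)

        # Fresh hit: answer locally, with no round trip to the origin.
        if entry is not None and now - entry[0] < self.ttl:
            return entry[1]

        try:
            value = fetch(key)
        except Exception:
            # Origin unreachable: serve the stale copy if one exists.
            if entry is not None:
                return entry[1]
            raise

        self._store[key] = (now, value)
        return value
```

A caller would pass whatever function retrieves the value from the origin, for example `cache.get("sensor-7", load_from_origin)`, where `load_from_origin` is likewise a placeholder. Serving stale data trades consistency for availability, so this pattern only suits data that tolerates brief staleness.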

Use Cases and Best Practices for Developers and Teams

Because edge architectures favor distributed processing and resource locality, developers and teams should target use cases with autonomous, low-latency requirements: those that depend on data locality, real-time decision-making, and resilient coordination across heterogeneous nodes.

Edge workflows enable modular deployment. Latency targets, data governance rules, and security posture should guide architectural choices, the metrics a team tracks, and its compliance obligations.

Teams should document reproducible patterns, track data provenance, and enforce consistent data access policies across environments.
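One way to make provenance checkable is a hash-chained log, in which each record stores the hash of the record before it. The Python sketch below illustrates that general technique only. The function names `append_record` and `verify_chain` and the record fields are assumptions made for this example, not an interface defined by Puwipghooz8.9 Edge.

```python
import hashlib
import json
from typing import Any, Dict, List

GENESIS_HASH = "0" * 64  # placeholder "previous hash" for the first record


def _digest(body: Dict[str, Any]) -> str:
    # Serialize with sorted keys so the same content always hashes the same way.
    encoded = json.dumps(body, sort_keys=True).encode("utf-8")
    return hashlib.sha256(encoded).hexdigest()


def append_record(log: List[Dict[str, Any]], node_id: str,
                  payload: Dict[str, Any]) -> Dict[str, Any]:
    """Append a record that is linked to the previous one by its hash."""
    prev_hash = log[-1]["hash"] if log else GENESIS_HASH
    body = {"node_id": node_id, "payload": payload, "prev_hash": prev_hash}
    record = {**body, "hash": _digest(body)}
    log.append(record)
    return record


def verify_chain(log: List[Dict[str, Any]]) -> bool:
    """Return True only if no record was altered, removed, or reordered."""
    prev_hash = GENESIS_HASH
    for record in log:
        body = {key: record[key] for key in ("node_id", "payload", "prev_hash")}
        if record["prev_hash"] != prev_hash or record["hash"] != _digest(body):
            return False
        prev_hash = record["hash"]
    return True
```

Changing any earlier payload invalidates every later hash, so tampering is detectable. The sketch does not authenticate who wrote a record; a real deployment would also need signatures or access control.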

Benefits, Trade-Offs, and How to Decide if It Fits Your Project

Because edge computing emphasizes distributed processing and data locality, teams should weigh its benefits against its trade-offs and assess fit against the requirements discussed earlier: latency, resilience, and governance. The benefits are responsiveness and localized processing; the trade-offs are added complexity and security risk.

The decision hinges on whether edge deployment is viable and consistent with organizational goals, with latency targets, cost, and governance requirements weighed against project constraints.
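A team that wants to make this weighing explicit could use a simple weighted score. The Python sketch below is one hypothetical way to do so. The `fit_score` function, the criterion names, and the example weights are illustrative assumptions; real weights should come from the project's own priorities.

```python
from typing import Dict


def fit_score(ratings: Dict[str, float], weights: Dict[str, float]) -> float:
    """Weighted average of per-criterion ratings, each expected in [0, 1]."""
    total_weight = sum(weights.values())
    if total_weight <= 0:
        raise ValueError("weights must sum to a positive value")

    missing = set(weights) - set(ratings)
    if missing:
        raise ValueError(f"missing ratings for: {sorted(missing)}")

    weighted_sum = sum(ratings[name] * weight for name, weight in weights.items())
    return weighted_sum / total_weight


# Hypothetical example: latency matters twice as much as cost or governance.
example_weights = {"latency": 2.0, "cost": 1.0, "governance": 1.0}
example_ratings = {"latency": 1.0, "cost": 0.5, "governance": 0.5}
example_score = fit_score(example_ratings, example_weights)  # 0.75
```

A score like this does not make the decision; it records the assumptions behind it so they can be reviewed and revised.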

Conclusion

Puwipghooz8.9 Edge presents a balance of advantages and constraints. Its distributed, low-latency architecture supports localized decision-making and resilient messaging, but implementation complexity and security considerations call for deliberate governance and provenance controls. When combined with modular workflows and adaptive resource allocation, the architecture can yield meaningful performance improvements. Teams should weigh these trade-offs against project scope and risk tolerance, and adopt incrementally, guided by evidence, to avoid overcommitment.